Update October 2019:

Following the death of Molly Russell, Instagram has worked hard to ban self-harm images on its platform.

Self-harm images can now rarely be seen on Instagram (though they can never disappear 100%). Instagram has even vowed to ban any drawings or memes relating to self-harm or suicide.

The death of Molly Russell has definitely had a big impact on social media companies.

While companies like Instagram are trying to make their platforms safer, it is important that parents and teachers understand how Instagram works and where users can find such disturbing content on the platform.

Psst, don’t forget to check out the Growth Mindset Kit, designed to help children develop positive habits from a young age.

The Death of a Teen Girl Linked to Instagram

Recently, news broke about Molly Russell, a 14-year-old who took her own life after viewing content linked to suicide on Instagram.

Her father told the BBC that he believed Instagram “helped kill my daughter”.

Molly Russell, 14, took her own life in 2017 after viewing disturbing content about suicide on Instagram and Pinterest.

Understanding online self-harm:

In other news relating to online self-harm, the BBC reported that a 12-year-old girl, Libby, shared her fresh cuts with 8,000 followers on Instagram. Her followers would even advise her on how to make the cuts deeper to produce more blood.

When Libby’s father discovered her Instagram account, he was shocked to find comments from others telling her how to cut herself.

“You shouldn’t have done it this way, you should have done it like that. Don’t do it here, do it there because there’s more blood.”

In her interview, Libby said that it was easy to find a tribe that would do such things. She felt comfortable sharing her anxiety and self-harm tendencies with her online tribe.

In another interview I watched on the BBC, a girl reported that there are even Facebook groups dedicated to self-harm, whose members challenge each other over who has the “best” cuts.

This almost glorifies self-harm, which can make it feel addictive.

Is Instagram combating self-harm?

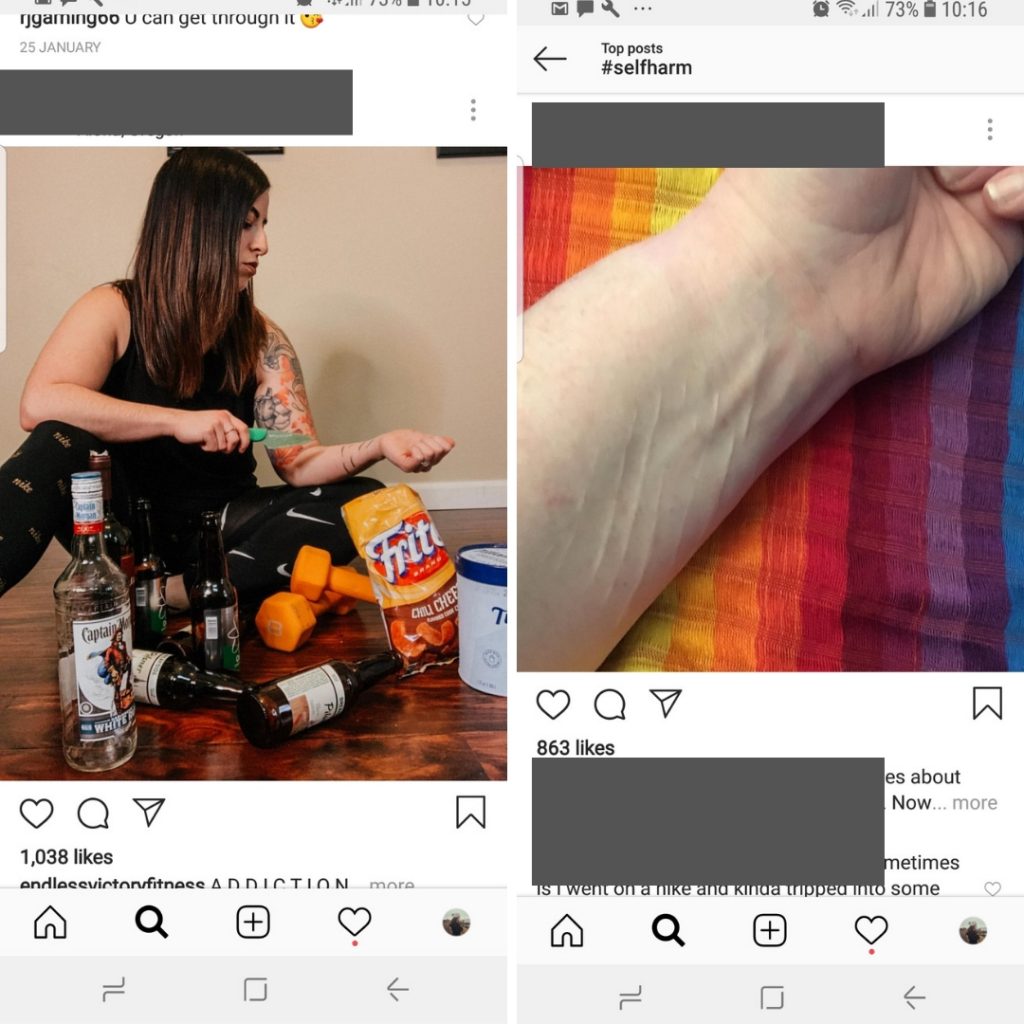

While writing this article, I could easily find self-harm images on Instagram just by typing a few obvious words relating to the act.

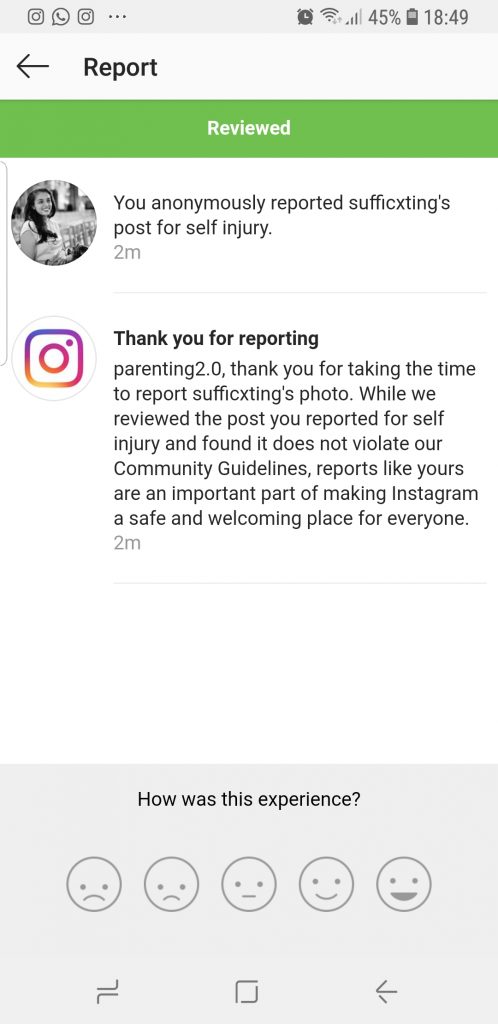

Worst of all, when I tried reporting the images, I got a message saying that “it does not violate our community guidelines”.

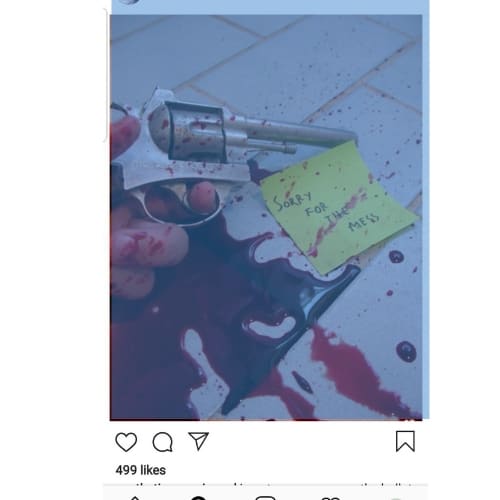

This is an example of one of the images I reported:

This is the reply from Instagram:

It is sad to see that Instagram does NOT consider such content dangerous or violent when it clearly has 13-year-old kids using its platform.

Instagram states that discussing issues like this with others can help young people cope with their struggles and connect with others. While this is true, it does not mean that Instagram should allow these images on its platform.

Instead, it could support those conversations by allowing positive quotes of some kind that help people battle mental health issues.

Self-harm content that can be found on Instagram

A simple hashtag search, such as #selfharm, #cutting, or #depression, brings up very dark images.

Every child on Instagram has access to these pictures.

5 ways to help your child use social media safely

1. Use Parental Control Software

Instagram has no built-in parental control features. The only way to get them is to install parental monitoring software on your child’s phone.

Also, no one under the age of 13 should be using Instagram. Even though this is the age limit set, I would hold off letting them on social media for as long as possible.

2. Follow your kids on social media and use the anti-bullying feature

Instagram has a feature where you can remove offensive comments from posts. However, this does not necessarily mean bullying is prevented.

Follow your kid’s account, and also check their Instagram app to make sure they do not have a Finstagram (a second or fake Instagram account).

3. Talk to your kids often about difficult subjects like self-harm, sexting, etc.

You might feel uncomfortable talking to your kids about difficult subjects like pornography, sexting, grooming, self-harm, etc. But if your kid is ready for a smartphone, then you will have to talk about these topics, and talk about them often.

Don’t forget to switch off the location setting on their mobile phone, check their privacy settings, and check for a Finsta account (fake Instagram account).

4. Be involved in their online activities

Do not leave your child to wander the online world on their own. Sit and browse together with them.

If your child is younger than 13 years old then let them know that they should not be on any social media platform.

If they are above 13, then inform them that you will be following their accounts and monitoring their usage. Let your child know that the reason you are doing this is not to spy on them but to keep them safe from harmful content.

5. Get help

If you think your child is going through some difficulties, don’t be scared to get some help. Contact your local charities, school, and counselors to get some guidance.

If your child has a social media account, then talk to them about why you think it is necessary for you to be monitoring their account.

Here are tips to help you keep children safe in the digital age:

Tips for Non-Tech Savvy Mums: 4 tips to digital parenting for non-tech savvy mums
